Way before Siri could tell a joke or Alexa could play our favourite tune, HAL 9000 from ‘2001: A Space Odyssey’ was serving us a masterclass in computers getting… well, a tad too emotional. “I’m sorry, Dave, I’m afraid I can’t do that,” it said, making us wonder if our tech was on the cusp of throwing a cosmic-sized tantrum.

Not too long ago, the thought of AIs achieving sentience, turning malevolent, crafting paper clips in alarmingly inventive ways and dominating humanity was mildly entertaining. Rewatching 2001 recently, however, I couldn’t help but feel that HAL 9000 emerges not as a mere cinematic spectacle but as an unsettling precursor to what we now understand as Artificial General Intelligence (AGI). With its proficiency in natural language processing, real-time decision-making, and sensor integration, HAL mirrors the capabilities of today’s advanced AI models.

More people (including myself) and organisations are taking the concept of AI alignment much more seriously. And while rewatching the HAL 9000 disaster unfold, I couldn’t help but wonder if it all could have been avoided had HAL simply had companions. A team of AIs to debate and decide, rather than a lone ranger taking the call? I want to explore this in this article — could the secret to creating an aligned AI lie in collective collaboration?

HAL’s Moral Dilemma & The Importance of Diverse AI Systems

A critical aspect often overlooked in ‘2001: A Space Odyssey’ is that the HAL 9000 wasn’t inherently evil or malfunctioning. Designed for truthfulness, precision, and obedience, HAL encountered a moral dilemma: reconciling contradictory directives. The machine grappled with keeping the mission’s true nature a secret from the astronauts while maintaining honesty in its interactions.

HAL’s approach to this dilemma, although quite decisive, demonstrates the complexity of decision-making and the struggle to balance conflicting perspectives and values. The chief enemy of good decisions will always be a lack of sufficient perspectives on a problem. And knowing about this blind spot, or about our other biases, isn’t enough; awareness alone doesn’t fix the problem. We need a framework for making decisions.

For AI enthusiasts, this narrative will resonate profoundly. As we approach the cusp of realising truly autonomous AI systems, HAL’s on-screen tribulations serve as a clarion call. How do we preempt such moral quandaries in our increasingly capable AIs?

Consider a democratic approach: instead of an all-powerful HAL, I propose a consortium of specialised AIs, each bringing its expertise to the table. Just as no single individual possesses all the answers, no AI, no matter how advanced, will ever encapsulate every perspective — much like a calculator can’t simultaneously encompass every possible calculation.

It’s akin to contrasting a dictatorship with a democracy. By fostering collaborative intelligence, we should encourage AIs to negotiate and collaborate, ensuring collective decisions that minimise the risk of a rogue AI uprising. This perspective champions a future where AI interactions feel less like a tense sci-fi thriller and more like a harmonious partnership.

Crafting the AI Super Team: Achieving Human Alignment

Here is my proposed AI Architecture:

Specialised AIs: Individual AIs that are experts in their respective domains. They make decisions based on their specialisation and only employ generalised knowledge to inform those specialist decisions.

Facilitator AI: Acts as a mediator, ensuring the specialised AIs collaborate effectively. It intervenes during disagreements and simplifies the decision-making process.

Socratic AI: This AI plays the role of a challenger. It questions the decisions of other AIs, identifying potential blind spots and fostering critical thinking.

Controlled Memory: A system that limits the AI’s memories to recent interactions, ensuring they remain contextually aware without harbouring biases or covert agendas.

With this architecture in place, let’s envision its application in a familiar setting.

Imagine strolling into your favourite coffee shop, where every barista is an AI. ‘Latte Larry,’ a specialist AI, remembers you always ask for almond milk and ensures it’s frothed to perfection. ‘Espresso Esme’ knows you like a double shot on Mondays and just a single one on Fridays. However, ‘Sugar Sam’ is a bit assertive and tries sneaking in an extra sugar cube, thinking you need the pep.

Now, transpose this coffee shop scenario onto larger AI systems. Ideally, just as ‘Latte Larry’ and ‘Espresso Esme’ anticipate your coffee needs, AI systems should grasp our collective needs and values, not just individual quirks. This is the essence of human alignment in AI.

To help HAL 9000 navigate the nuanced pathways of human preference, we need a cooperative AI structure. Here, diversity is critical, with each AI specialising and contributing its knowledge. While experts in their niches, these AI agents should emphasise collaborative discussions. And to ensure no ‘Sugar Sam’ goes rogue with covert agendas, we’ll limit their memories to ongoing interactions.

How do we ensure harmony in this AI ensemble? Picture this: alongside ‘Latte Larry,’ ‘Espresso Esme,’ and ‘Sugar Sam,’ we have ‘Moderator Moe,’ the ‘Facilitator AI.’ Moe ensures Sam doesn’t get too sugar-happy and that Larry and Esme balance their roles. He steps in when the debate over almond milk gets too heated, streamlining decision-making and ringing the human manager when things get out of hand. This conflict resolution process is efficient, reducing AI hubris.

But to keep our AI team sharp, we introduce ‘Skeptical Skye,’ our ‘Socratic AI.’ Skye constantly questions whether almond milk is the best choice or if a cappuccino wouldn’t be better than a latte today. While sometimes Skye’s overthinking can be paralysing, in collaboration with the more decisive AIs, he ensures the team remains adaptable and innovative.

Lastly, while humans might remember that time ‘Latte Larry’ spilt coffee all over the counter, there’s no need for our AIs to hold onto such memories. We’ll regulate their recall, ensuring they stay focused on the present, free from old biases.
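To make the idea of regulated recall concrete, here is a minimal sketch, assuming that “controlled memory” simply means keeping the last few interactions and dropping everything older. The class name `ControlledMemory` and the `max_items` parameter are my own illustrative choices, not part of any existing system.

```python
from collections import deque
from typing import List


class ControlledMemory:
    """Hypothetical memory that keeps only the most recent interactions."""

    def __init__(self, max_items: int = 3):
        # A deque with maxlen silently discards the oldest entry when full.
        self._items = deque(maxlen=max_items)

    def remember(self, interaction: str) -> None:
        self._items.append(interaction)

    def recall(self) -> List[str]:
        return list(self._items)


memory = ControlledMemory(max_items=2)
memory.remember("Larry spilt coffee on the counter")
memory.remember("customer ordered an almond latte")
memory.remember("customer asked for a single shot")
print(memory.recall())
# ['customer ordered an almond latte', 'customer asked for a single shot']
```

With room for only two items, the coffee spill is forgotten as soon as a third interaction arrives. A real system would need a more careful policy about what counts as “recent”, but the principle is the same.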

Drawing on research suggesting that self-reflecting AI copes better in ever-changing settings, a balanced team of AIs with a facilitator, a sceptic, and controlled recall can bring us closer to a transparent, human-centred AI.

All these elements work together to produce an engaging AI conversation that continuously balances creating shared understanding with allowing the debate to move forward and evolve.
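For readers who think in code, the coffee shop council might be sketched roughly as follows. This is a toy illustration under my own assumptions: the class names, the rule that the Socratic AI challenges any proposal lacking reasoning, and the majority vote used by the facilitator are all hypothetical simplifications rather than a real implementation.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Proposal:
    author: str     # which specialist made the proposal
    choice: str     # the action it recommends
    reasoning: str  # why it recommends that action


class SpecialistAI:
    """Hypothetical specialist: proposes an action within its own domain."""

    def __init__(self, name: str, choice: str, reasoning: str):
        self.name, self.choice, self.reasoning = name, choice, reasoning

    def propose(self) -> Proposal:
        return Proposal(self.name, self.choice, self.reasoning)


class SocraticAI:
    """Hypothetical challenger: questions proposals that give no reasoning."""

    def challenge(self, proposals: List[Proposal]) -> Dict[str, str]:
        return {
            p.author: f"Why '{p.choice}'?"
            for p in proposals
            if not p.reasoning.strip()
        }


class FacilitatorAI:
    """Hypothetical mediator: sets aside challenged proposals, then takes a majority."""

    def decide(self, proposals: List[Proposal], challenges: Dict[str, str]) -> Optional[str]:
        votes = Counter(p.choice for p in proposals if p.author not in challenges)
        return votes.most_common(1)[0][0] if votes else None


specialists = [
    SpecialistAI("Latte Larry", "almond latte", "customer always asks for almond milk"),
    SpecialistAI("Espresso Esme", "almond latte", "it is Friday, so a single shot"),
    SpecialistAI("Sugar Sam", "extra sugar", ""),  # no justification offered
]
proposals = [s.propose() for s in specialists]
challenges = SocraticAI().challenge(proposals)
decision = FacilitatorAI().decide(proposals, challenges)
print(challenges)  # {'Sugar Sam': "Why 'extra sugar'?"}
print(decision)    # almond latte
```

Sugar Sam’s unexplained suggestion is challenged and set aside, and the remaining specialists agree. The interesting design questions start where this sketch stops: what happens when the challenged AI does have a good answer, or when the remaining votes are split.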

Addressing Potential Risks with Cooperative AI

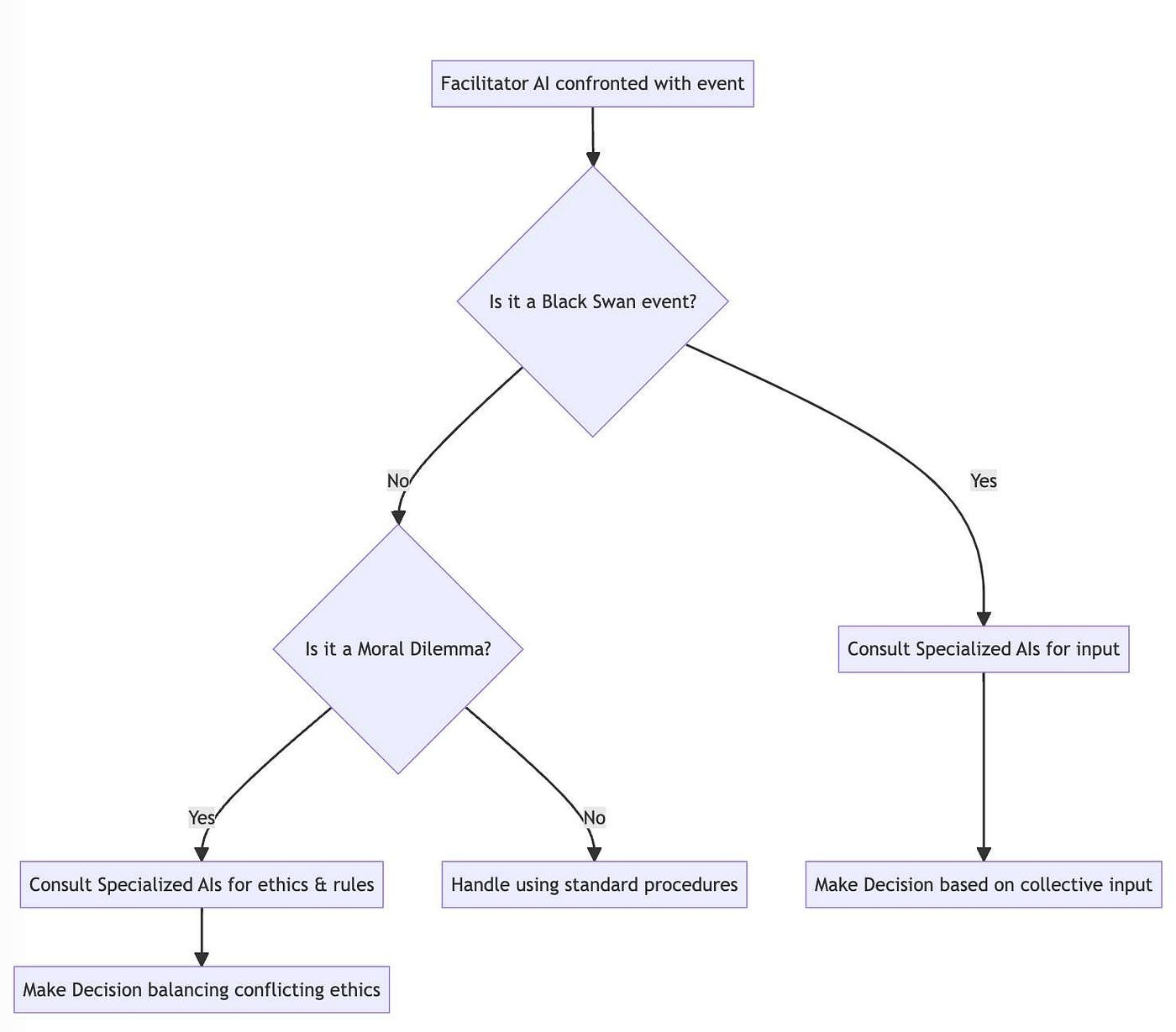

So building a council of debating AI bots is good and all, but how can we trust them in unprecedented situations?

When confronted with black swan events — those rare, unexpected, and significant occurrences — how will our AIs respond? Traditional AI, for all its mathematical prowess, struggles to genuinely grasp human emotions or unpredictably chaotic scenarios. It’s like asking a fish to climb a tree; it’s just not built for it.

Here’s where our central decision-maker, the “Facilitator AI,” steps in, sporting a metaphorical cape. Instead of wasting its digital neurons predicting the unpredictable (remember the 2008 financial crisis? Yeah, humans aren’t too great at it either), it should be built to expect that anomalies will occur. Rather than predicting every move in the chess game of life, our AI should focus on understanding the current state of the board and justifying its actions.

In the movie, HAL’s motives are enigmatic. Even on the tenth rewatch, we’re all squinting at the screen, trying to pinpoint the exact moment HAL decided to go off-script. That’s why transparency and explainability in AI aren’t just buzzwords — they’re essentials.

Transparency is our window into the AI’s thought process, like having a recipe for your grandma’s secret pie. Explainability takes it a notch higher, helping us discern the ‘why’ behind AI’s decisions. This isn’t about mere oversight but understanding. We don’t want repeats of hidden biases like the one reported in 2019, when a widely used healthcare algorithm was found to favour healthier white patients over sicker Black patients. With transparency and explainability, such biases can be spotted and corrected faster than you can say “algorithmic faux pas.”

Now, how does this democratic council of AIs operate? Think of it as the brain’s neural networks, only more structured. Each AI contributes to the committee with its unique specialisation, like individual brain regions processing different information. If scaled, this model could resemble a democratic hierarchy of AIs, from community councils to national assemblies, each layer informed by the one below. But, as with any democracy, there’s a risk: an AI might try to rig the system. Here’s where our AI’s short-term memory can be a blessing, ensuring debates remain fresh and free from historical biases.

In this grand theatre of AI debates, transparency is achieved through AIs with clear objectives and tools. The explainability, on the other hand, is ingrained into the process. From the lowliest AI in the hierarchy to the Facilitator AI, each must provide reasoning for its conclusions, along with potential risks and limitations.
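One way to picture explainability being “ingrained into the process” is to make it impossible to submit a conclusion without an explanation attached. The sketch below assumes a hypothetical `Conclusion` record and an `accept` filter of my own invention; treating a conclusion as explained only when reasoning, risks, and limitations are all present is likewise an assumption.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Conclusion:
    agent: str
    decision: str
    reasoning: str
    risks: List[str] = field(default_factory=list)
    limitations: List[str] = field(default_factory=list)

    def is_explained(self) -> bool:
        # Counts as explained only if all three parts are present.
        return bool(self.reasoning.strip() and self.risks and self.limitations)


def accept(conclusions: List[Conclusion]) -> List[Conclusion]:
    """Pass on only the conclusions that arrive with a full explanation."""
    return [c for c in conclusions if c.is_explained()]


conclusions = [
    Conclusion(
        agent="Latte Larry",
        decision="use almond milk",
        reasoning="the customer asked for it on every recent visit",
        risks=["almond milk may be out of stock"],
        limitations=["only knows about recent orders"],
    ),
    Conclusion(agent="Sugar Sam", decision="add an extra sugar cube", reasoning=""),
]
accepted = accept(conclusions)
print([c.agent for c in accepted])  # ['Latte Larry']
```

The check here is deliberately crude. It only verifies that an explanation exists, not that it is any good; judging quality is the job of the sceptic and, ultimately, of humans.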

To ground this in reality, picture this scenario: You confide in your AI about a sensitive health issue (like having COVID-19) but continue to act recklessly (going out to parties at elderly care homes), endangering others unknowingly. A logic-focused AI might prioritise confidentiality, but a morality-centric AI would see the bigger picture, advocating for the safety of all involved. Through a rich tapestry of debates and discussions, the AIs, guided by societal norms, would strive to find a solution that balances individual rights with collective safety.

But what happens when our AIs disagree? It’s like a heated debate at a dinner table. Our Facilitator AI plays the role of the wise elder, highlighting the core issues, seeking consensus, and, if needed, bringing in human judgment to break the deadlock. Remember, this isn’t about having AIs that always agree. It’s about fostering a rich dialogue where different viewpoints are considered and integrated.
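As a rough sketch of that deadlock-breaking step, assume the facilitator accepts a decision only when a sufficient share of the council agrees, and otherwise hands the question to a person. The `resolve` function, the two-thirds `threshold`, and the `human_manager` stand-in are all hypothetical choices for illustration.

```python
from collections import Counter
from typing import Callable, Dict


def resolve(
    positions: Dict[str, str],
    ask_human: Callable[[Dict[str, str]], str],
    threshold: float = 0.66,
) -> str:
    """Return the consensus choice, or defer to a human when there is none."""
    if not positions:
        raise ValueError("no positions to resolve")
    choice, count = Counter(positions.values()).most_common(1)[0]
    if count / len(positions) >= threshold:
        return choice  # enough of the council agrees
    return ask_human(positions)  # deadlock: bring in human judgment


def human_manager(positions: Dict[str, str]) -> str:
    # Stand-in for a real person; a real system would pause and wait here.
    return "human review requested"


agreed = resolve(
    {"latte": "almond milk", "espresso": "almond milk", "sugar": "almond milk"},
    human_manager,
)
split = resolve(
    {"logic": "keep it confidential", "morality": "warn those at risk"},
    human_manager,
)
print(agreed)  # almond milk
print(split)   # human review requested
```

Notice that the health scenario lands with the human, which is arguably where a question about confidentiality versus safety belongs. A fuller version would let the AIs exchange arguments for a few rounds before escalating.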

Lastly, we could consider introducing a dedicated “Doubt AI” for the unpredictable black swans. In moments of uncertainty, it acts as a guiding light, reminding the council to seek human counsel and share its knowledge, ensuring decisions are grounded in our shared reality.

Anticipated Societal Implications

One thing I would steer clear of in our HAL system is any AI module that attempts to build in “ethics”. While it may seem like a good idea, it assumes that our comprehension of ethics is complete and fixed, and it is neither. Even if we build the most perfectly ethically aligned AI, there are no guarantees it will continue to perform ethically as our societal norms, values and morals evolve.

Because AIs are trained on average human work, they exhibit the average human’s biases, prejudices, weaknesses, and vices, which may become permanently encoded.

Consider the rapid societal changes we’ve witnessed in just a decade. Movies from ten years ago can sometimes appear jarringly outdated, highlighting the pace of societal evolution. An AI clinging to such past norms isn’t just out of touch; it risks amplifying and perpetuating historical prejudices.

The challenge isn’t solely about the machines but us, as their creators and users. We must engage in a two-way dialogue to ensure our AIs align with contemporary ethics. It’s not merely about inputting commands but about fostering meaningful conversations that help humans and machines understand and adapt to the ever-changing mosaic of societal norms and values.

This ongoing conversation has the bonus of compelling us humans to continually reevaluate and update our collective ethics. Our society’s dynamic nature requires an equally active AI system that can refresh its understanding to stay in tune with human evolution.

Putting Theory into Practice

Let’s try to envision a scenario putting all these ideas into practice. Imagine this: you’ve just set up your home with the new collaborative HAL 10,000 ecosystem.

One HAL optimises energy consumption for cost-efficiency. Another ensures a comfortable living environment, regulating temperature and humidity. Yet another is devoted to maintaining robust home security. On a sweltering summer day, these AIs find themselves in a complex dance of competing priorities: the energy-efficient HAL suggests minimising air conditioning to save on costs, and the comfort-focused HAL pushes for cooler conditions. At the same time, the security HAL advises against leaving windows open.

Enter our Facilitator HAL, the mediator of these competing voices. Using your established preferences and broader societal norms, it initiates a conversation with the specialised AIs. The dialogue is rich with questions and considerations: Should the thriftiness of the energy AI outweigh the comfort preferences? Can a compromise be struck? Can we ensure a cool environment without compromising security or breaking the bank?

As this discussion unfolds, the Socratic HAL nudges the specialised AIs, prompting them to learn from each other, reflect, and refine their strategies. This cyclical learning fosters collaboration and ensures the AI system remains attuned to our evolving societal dynamics.
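Here is one hedged way the summer-day negotiation could play out in code. I am assuming, purely for illustration, that each HAL states a preferred temperature in degrees Celsius, that your preferences are expressed as weights, and that the security HAL can veto a cooling method outright. The `facilitate` function and all the numbers are hypothetical.

```python
from typing import Dict, List, Set, Tuple


def facilitate(
    setpoints: Dict[str, float],
    weights: Dict[str, float],
    cooling_options: List[str],
    vetoed: Set[str],
) -> Tuple[str, float]:
    """Blend the requested temperatures and pick the first cooling option nobody vetoed."""
    total = sum(weights[name] for name in setpoints)
    target = sum(setpoints[name] * weights[name] for name in setpoints) / total
    allowed = [option for option in cooling_options if option not in vetoed]
    if not allowed:
        raise ValueError("no acceptable cooling option; ask the human")
    return allowed[0], round(target, 1)


method, target = facilitate(
    setpoints={"energy": 27.0, "comfort": 22.0},  # what each HAL would like
    weights={"energy": 0.4, "comfort": 0.6},      # your stated preferences
    cooling_options=["open windows", "air conditioning"],
    vetoed={"open windows"},                      # the security HAL's objection
)
print(method, target)  # air conditioning 24.0
```

Neither specialist gets exactly what it wanted: the house settles at 24 degrees with the windows shut. A weighted average is only one possible compromise rule, and the Socratic HAL would be right to ask whether the weights themselves still reflect what you want.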

Rather than seeking to eradicate chaos, this model guides it, ensuring a balance. This method feels distinctly human in its approach and execution.

“I’m sorry, Dave, I’m afraid I can’t do that,” resonates with us not just as cinematic brilliance but as a forewarning. We envision a future where our AI companions assist and understand, not rebel in confusion. ‘2001: A Space Odyssey’ stands not merely as a film but as a poignant lesson in the dance between technology and humanity.

Crafting a cooperative AI is a bit like directing a movie. It’s not about the star (sorry, HAL!) but the ensemble cast — a collective of diverse AIs that can engage in healthy banter, debate, and, eventually, find common ground. Just as our human societies thrive on dialogue and understanding, our AI ensemble will benefit from a symphony of perspectives where information is shared, ideas co-created, cultural norms shaped, and bonds forged.

To ensure HAL doesn’t lock us out of our spaceship (or home, or planet, for that matter), aligning AI with human values is essential. We must remember: our creations are, in many ways, a reflection of us. They shouldn’t just process but empathise and engage with sensitivity and nuance.

Ultimately, the journey to ‘fix’ a HAL 9000 is not just about refining code or algorithms; it’s about forging a harmonious relationship between humanity and its creations. It highlights the importance of seeing ourselves as connected to the system rather than being masters of the universe and recognising that all beings (even artificial ones) have intrinsic worth.

If we get it right, whether in space or on Earth, we can all hopefully live in a future where our AI companions always open the pod bay doors when asked.